Artificial intelligence has moved from experimental proofs-of-concept to operational deployment across the pharmaceutical manufacturing value chain. As of 2026, the global AI in pharmaceutical market is valued close to USD 4.5 billion, with manufacturing-specific applications capturing a significant portion of this revenue.

For small and mid-size pharmaceutical companies, the transition to AI is no longer optional. These organizations face the same regulatory compliance obligations as large enterprises but operate with smaller budgets and leaner IT teams. Success in this landscape requires a shift from chasing algorithms to building foundational “Manufacturing Intelligence.”

The ROI Challenge: Why Only 22% of Leaders Scale Successfully

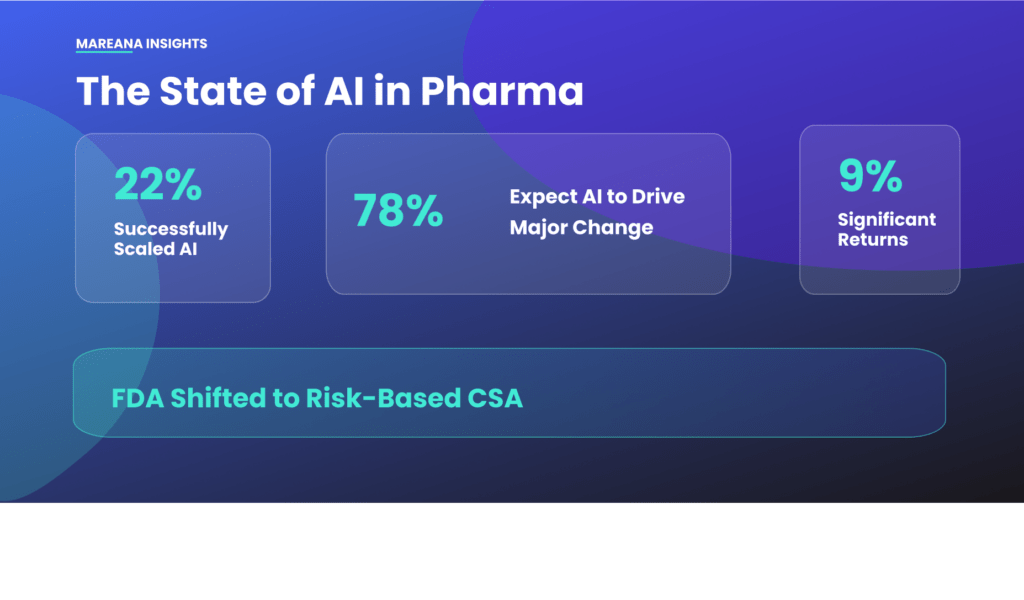

The potential for efficiency is high, yet only a small fraction of organizations realize AI ROI at scale. A 2026 Deloitte survey found that while 80% of organizations use generative AI in some capacity, only 22% of life sciences leaders have successfully scaled their AI initiatives. Just 9% reported significant financial returns.

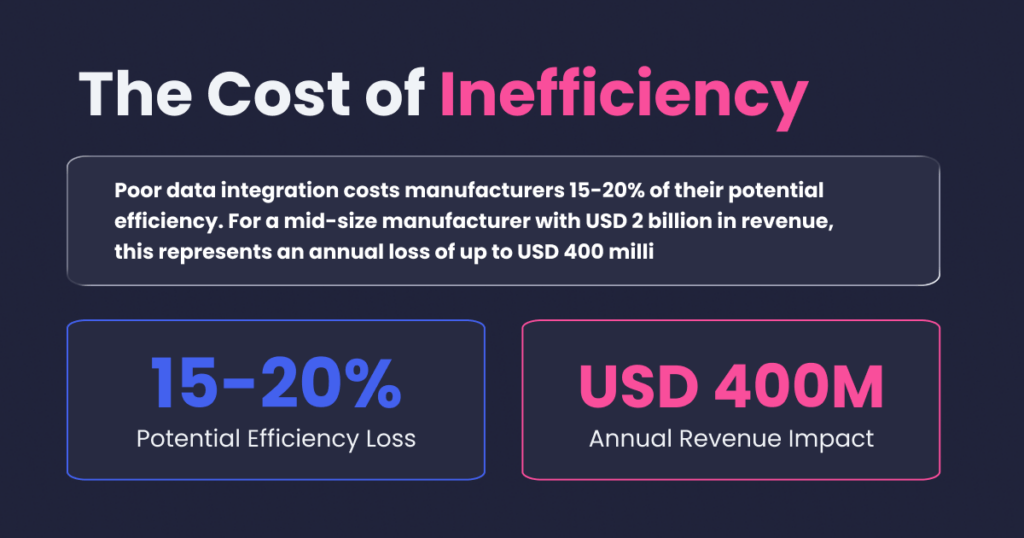

The primary barrier to scaling is the “Digital Transformation Paradox.” Companies have invested millions in digital systems like ERP and MES, yet close to 70% of their data remains “dark”—physically present but analytically inaccessible. Poor data integration costs manufacturers 15-20% of their potential efficiency. For a mid-size manufacturer with USD 2 billion in revenue, this represents an annual loss of up to USD 400 million.

The Regulatory Landscape: Converging on Governance

Regulatory bodies have moved from observation to active governance. AI systems in GMP environments now face formal requirements for validation, traceability, and human oversight.

FDA: Risk-Based Credibility: The FDA’s “Computer Software Assurance” (CSA) guidance, finalized in September 2025, replaces exhaustive scripted testing with a risk-based approach. Additionally, the January 2025 draft guidance on AI support for regulatory decision-making establishes a credibility assessment framework that governs how AI data is used in drug applications.

EU GMP: Formalized Annex 22: In Europe, the introduction of Draft EU GMP Annex 22 represents the first dedicated framework for AI in manufacturing. It mandates strict adherence to ALCOA++ principles and requires human oversight for critical GxP activities like batch release.

Global Harmonization: The January 2026 joint publication of AI Practice Guiding Principles by the FDA, EMA, and Health Canada signals a future where a single validation package might satisfy multiple jurisdictions. Regulators emphasize “explainability,” discouraging black-box models in favor of architectures that allow quality teams to understand the rationale behind a decision.

Internal Adoption and Agentic Oversight by FDA: The FDA has moved beyond regulating AI to adopting it internally. In early 2026, the agency fully deployed Project Elsa, an internal generative AI platform with agentic capabilities. The FDA uses these agents to autonomously process historical data populations, identify behavioral patterns (such as closing large numbers of CAPAs before an inspection), and flag discrepancies between disparate systems like LIMS and MES logs.

This shift toward “real-time regulatory monitoring” means the FDA can identify process drifts and data integrity failures long before a physical site visit occurs. In April 2026, the FDA issued its first Warning Letter specifically citing a manufacturer for the improper use of AI agents in quality decisions without adequate human oversight.

Challenges of Using AI in Pharma Manufacturing

The case for AI in pharmaceutical manufacturing is well established. The path to realizing it is not. Organizations that approach AI deployment without understanding the specific obstacles of a GMP-regulated, data-fragmented, risk-averse manufacturing environment consistently underestimate what the transition requires and overestimate what technology alone can deliver.

These are the challenges that separate organizations that scale AI successfully from those that do not:

1. The Data Foundation Problem

The most sophisticated AI model produces unreliable outputs when the data it consumes is incomplete, inconsistent, or poorly connected. In pharmaceutical manufacturing, this is the baseline condition for most organizations.

Manufacturing data is distributed across MES, LIMS, ERP, QMS, historians, and paper records, systems that were not designed to interoperate and that use different identifiers, different timestamps, and different conventions for representing the same manufacturing concepts. Close to 70% of manufacturing data in a typical pharmaceutical facility is analytically inaccessible, physically present somewhere in the system landscape but not connected, not contextualized, and not queryable.

AI models trained or operated on this fragmented data learn from an incomplete picture of manufacturing reality. They may identify correlations that reflect data collection artifacts rather than genuine process relationships. They may fail to detect patterns that span system boundaries. And their outputs may not be defensible to a regulatory reviewer who asks how the model’s training data was assembled and validated.

Solving the data foundation problem is a prerequisite for AI deployment, not a parallel workstream. Organizations that invest in manufacturing data connectivity through knowledge graph architecture, paper digitization, and system integration before deploying AI models consistently outperform those that attempt both simultaneously.
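The identifier and timestamp mismatches described in this section can be illustrated with a minimal sketch. It assumes two hypothetical exports, one from an MES and one from a LIMS, that describe the same batch with different ID formats, date formats, and time zones. The field names, formats, and UTC offsets are illustrative assumptions, not the schema of any particular system.

```python
import re
from datetime import datetime, timedelta, timezone


def normalize_batch_id(raw: str) -> str:
    """Reduce differently formatted batch IDs to one canonical key."""
    # Upper-case, then drop separators such as "-", "/" and spaces.
    return re.sub(r"[^A-Z0-9]", "", raw.upper())


def to_utc(stamp: str, fmt: str, utc_offset_hours: int) -> datetime:
    """Parse a source-system timestamp and convert it to UTC."""
    local_zone = timezone(timedelta(hours=utc_offset_hours))
    return datetime.strptime(stamp, fmt).replace(tzinfo=local_zone).astimezone(timezone.utc)


def link_records(mes_rows, lims_rows):
    """Join MES and LIMS rows on the canonical batch key."""
    linked = {}
    for row in mes_rows:
        key = normalize_batch_id(row["batch"])
        # Assumption: this MES writes local time at UTC+1.
        linked.setdefault(key, {})["mes_end_utc"] = to_utc(row["end"], "%Y-%m-%d %H:%M", 1)
        linked[key]["yield_pct"] = row["yield_pct"]
    for row in lims_rows:
        key = normalize_batch_id(row["lot_no"])
        # Assumption: this LIMS writes UTC in day/month/year order.
        linked.setdefault(key, {})["lims_sampled_utc"] = to_utc(row["sampled"], "%d/%m/%Y %H:%M", 0)
        linked[key]["assay_pct"] = row["assay_pct"]
    return linked


# Hypothetical exports describing the same batch in two conventions.
mes_rows = [{"batch": "B-2024/0017", "end": "2024-03-05 14:30", "yield_pct": 92.4}]
lims_rows = [{"lot_no": "b20240017", "sampled": "05/03/2024 15:10", "assay_pct": 99.1}]

linked = link_records(mes_rows, lims_rows)
```

In practice the normalization rules themselves would need to be documented and controlled, since they determine which records the downstream model treats as the same batch.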

2. Validation and the Regulatory Compliance Burden

Every software system that performs a GxP function in pharmaceutical manufacturing requires validation. This principle extends to AI systems, but applying conventional Computer System Validation approaches to AI creates a fundamental tension.

Traditional CSV was designed for deterministic software: given the same inputs, the system always produces the same outputs, and those outputs can be verified through scripted testing. Machine learning models are not deterministic in the same way. Their outputs depend on training data, model architecture, and inference context, all of which change as the model is updated and as manufacturing conditions evolve.

The FDA’s Computer Software Assurance guidance, finalized in September 2025, moves toward a risk-based approach that is more compatible with AI system characteristics. But translating CSA principles into practical validation protocols for specific AI use cases requires expertise that most pharmaceutical quality and IT teams are still developing.

3. Data Integrity in AI-Generated Records

Any record generated by an AI system that supports a GxP decision (a batch release recommendation, a deviation flag, a CPV trend alert) must satisfy the same ALCOA++ data integrity principles as any other GMP record: attributable, legible, contemporaneous, original, accurate, complete, consistent, enduring, available, and traceable.

This requirement creates specific design obligations for AI systems in pharmaceutical manufacturing:

- Every AI output must be traceable to its input data, with a clear audit trail connecting the output to the specific data points that generated it

- AI-generated records must be protected from unauthorized alteration with the same controls applied to other electronic GMP records under 21 CFR Part 11 and EU GMP Annex 11

- Human review and approval must be documented for any AI output that informs a quality-critical decision; the AI cannot be the final decision-maker without human confirmation in the audit trail

Organizations that deploy AI without building these data integrity controls into the system architecture create regulatory exposure that may not become apparent until an inspection.
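As a rough illustration of the three design obligations above, the sketch below binds an AI output to a hash of its inputs, makes later alteration detectable, and records a human sign-off against the exact record that was reviewed. All function and field names are hypothetical. A content hash only provides tamper evidence; it is not a substitute for the access controls, electronic signatures, and audit trail functions expected under 21 CFR Part 11 and Annex 11.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(payload: dict) -> str:
    """SHA-256 over a canonical JSON form, so equal content gives equal hashes."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def create_record(model_version: str, inputs: dict, output: dict) -> dict:
    """Build an AI output record that is traceable to its input data."""
    body = {
        "model_version": model_version,
        "inputs": inputs,
        "input_hash": fingerprint(inputs),
        "output": output,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }
    # The record hash covers every field above, including the timestamp.
    return {**body, "record_hash": fingerprint(body)}


def is_unaltered(record: dict) -> bool:
    """Recompute the hash to detect changes made after creation."""
    body = {k: v for k, v in record.items() if k != "record_hash"}
    return fingerprint(body) == record["record_hash"]


def sign_off(record: dict, reviewer: str, decision: str) -> dict:
    """Document the human decision against the exact record reviewed."""
    if not is_unaltered(record):
        raise ValueError("record was modified after creation")
    return {
        "record_hash": record["record_hash"],
        "reviewer": reviewer,
        "decision": decision,
        "reviewed_at": datetime.now(timezone.utc).isoformat(),
    }


# Hypothetical deviation flag raised by a model on one batch parameter.
record = create_record(
    "deviation-model-1.2",
    {"batch": "B20240017", "hold_time_min": 75},
    {"flag": "hold time exceeds limit"},
)
review = sign_off(record, "qa.reviewer", "confirmed")
tampered = {**record, "output": {"flag": "none"}}
```

Here `is_unaltered(tampered)` returns `False`, so `sign_off` would refuse to approve the edited record.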

4. The Integration Complexity of Legacy Systems

Most pharmaceutical manufacturing sites operate a technology stack assembled over decades, with validated systems from multiple vendors running on infrastructure that was not designed for modern data integration. Connecting AI platforms to this environment without disrupting validated system states is a significant technical challenge.

Every interface between an AI platform and a validated GxP system, whether through API, database connection, or file transfer, requires validation in its own right. Changes to validated system configurations to enable data export may trigger revalidation of those systems. Data transformations that occur between the source system and the AI platform must be documented and controlled to ensure that the AI is working from accurate, complete representations of the source data.

For organizations with limited IT resources, the integration project required to feed AI systems with reliable, connected manufacturing data can consume more time and investment than the AI deployment itself.

5. Talent and Organizational Readiness

AI in pharmaceutical manufacturing requires a kind of expertise that is genuinely rare: professionals who understand data science and machine learning, who understand pharmaceutical manufacturing processes and GMP requirements, and who can communicate between these domains effectively. This “trilingual” competency (data science, life sciences, and GMP) is what allows organizations to deploy AI in ways that are both technically sound and regulatorily defensible.

Most pharmaceutical companies are building this competency from a starting point where these skills exist in isolation. Data scientists join from outside the industry and do not understand validation requirements. Quality professionals understand GMP but do not have the data science background to evaluate model outputs critically. IT professionals can build integrations but cannot assess the manufacturing relevance of the data they are moving.

Closing this gap requires deliberate investment in cross-functional training, recruitment strategies that prioritize hybrid competency, and organizational structures that bring data, quality, and manufacturing functions into genuine collaboration rather than sequential handoffs.

6. Change Management in a Risk-Averse Culture

Pharmaceutical manufacturing operates in a culture shaped by regulatory consequence. Quality decisions that turn out to be wrong do not just create operational problems; they create regulatory findings, product recalls, and in the most serious cases, patient harm. This culture produces a rational conservatism about process change that can become a significant barrier to AI adoption.

Asking a quality reviewer who has performed manual batch record review for fifteen years to trust an AI system’s exception-based output requires more than a demonstration that the system works.

Organizations that manage this change successfully treat AI deployment as a change management initiative as much as a technology project, investing in stakeholder engagement, transparent communication about system limitations, and phased rollout approaches that allow users to build confidence through experience before AI systems carry full operational responsibility.

High-Value AI Use Cases in Pharma Manufacturing

Specialized AI platforms are currently solving the technical bottlenecks that traditional systems cannot.

1. Genealogy: The Backbone of Traceability and Quality Assurance

Product genealogy (or material genealogy) is the detailed history and lineage of a product throughout its manufacturing journey. It tracks everything from raw materials through intermediates to the finished product and distribution. For Quality Assurance (QA) leaders, genealogy provides the comprehensive audit trail necessary to verify that quality standards have been met.

Challenges of Traditional Genealogy

Building a complete genealogy has traditionally been a slow, error-prone process. Data fragmentation across MES, LIMS, and paper logs creates silos that make manual reconciliation difficult. Legacy relational databases struggle to track the hierarchical, branching nature of material flows. For smaller firms, these complexities often stall genealogy initiatives.

How AI Automates Genealogy Construction

Modern AI-powered platforms, such as Mareana, simplify Genealogy implementation through several key functions:

- Automated Digitization: Pharma-specific OCR engines extract data from handwritten and printed records, reducing paper digitization efforts by up to 90%.

- Knowledge Graph Architecture: Unlike relational databases, AI-driven knowledge graphs automatically map relationships between materials, equipment, and outcomes. Years of batch history can be structured in hours rather than months.

- CDMO Integration: AI can ingest data from external manufacturing partners without requiring manual standardization, creating a unified view of the full lifecycle.
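To make the graph idea concrete, here is a minimal sketch of genealogy as a directed graph of lots, with a breadth-first traversal in both directions. The lot IDs and the `consumed_by` structure are invented for illustration, and a production knowledge graph would carry far more context (equipment, operators, test results) than this adjacency map.

```python
from collections import deque

# Hypothetical "consumed by" edges: each lot maps to the lots it went into.
consumed_by = {
    "RM-API-001": ["INT-GRAN-10", "INT-GRAN-11"],
    "RM-EXC-007": ["INT-GRAN-10"],
    "INT-GRAN-10": ["FG-TAB-100"],
    "INT-GRAN-11": ["FG-TAB-101", "FG-TAB-102"],
}


def invert(edges):
    """Flip edge direction so the graph can be walked from product to source."""
    inverted = {}
    for parent, children in edges.items():
        for child in children:
            inverted.setdefault(child, []).append(parent)
    return inverted


def trace(start, edges):
    """Breadth-first walk returning every lot reachable from `start`."""
    seen, queue = set(), deque([start])
    while queue:
        lot = queue.popleft()
        for neighbor in edges.get(lot, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


# Forward trace: which lots are affected if this raw material lot is suspect?
impacted = trace("RM-API-001", consumed_by)

# Backward trace: which lots contributed to this finished product lot?
sources = trace("FG-TAB-100", invert(consumed_by))
```

The branching that makes this awkward in relational tables (one raw material lot feeding several intermediates, each feeding several finished lots) is a plain traversal once the relationships are stored as edges.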

2. De-Risking Technology Transfer: Closing the “Know-How” Gap

Technology transfer is the complex migration of institutional knowledge, process expertise, and tacit know-how required to scale manufacturing. The traditional “push” model—where R&D hands over a static document package to manufacturing—frequently fails because it lacks the undocumented expertise (tacit knowledge) of the development team. This gap forces the receiving site to reverse-engineer the process, leading to delays, deviations, and millions in lost revenue.

Closing the Gap with Digital Twins

Manufacturing intelligence platforms like Mareana transform fragmented data into a complete, auditable digital record.

- Dynamic Digital Twins: By utilizing the Batch Genealogy module, AI creates an end-to-end visual map of all production data. This provides the new manufacturing site with an instant “digital twin” of the process, ensuring a deep understanding of historical behavior and process parameters.

- Ensuring Process Robustness (QbD): Successful tech transfer requires a deep understanding of the “design space.” Mareana’s Smart CPV (Continuous Process Verification) module uses predictive analytics to monitor raw materials and process parameters in real time. This ensures the process remains in a “state of control” at the new site, transitioning quality control from a reactive process to a proactive one.

Transforming Sponsor-CDMO Collaboration

The industry’s reliance on Contract Development and Manufacturing Organizations (CDMOs) makes tech transfer a mission-critical partnership. Traditional manual review methods create friction when discrepancies are found late in the release cycle.

- Unified Data Environments: A unified data platform allows both the sponsor and the CDMO to work from a shared source of truth.

- Exception-Only Workflows: Mareana’s Batch Release Copilot automates the reconciliation of batch records and trends. By flagging only deviations that require human attention, it accelerates batch release cycles and builds trust through transparency. This “first-time-right” approach is essential for accelerating drug pipelines and building resilient supply chains.


3. Review by Exception (RbE): Scaling Quality Oversight

Batch record reviews are a massive operational bottleneck. They are notoriously time-consuming and prone to human error due to reviewer fatigue and the sheer volume of data involved. While systems for capturing data have evolved, batch review decisions often still rely on a manual model designed for a paper-centric world.

Traditional Batch Review Software typically digitizes the paper format without automating the review logic. These systems operate in silos, which forces quality teams to manually reconcile data across fragmented platforms.

Review by Exception is a quality assurance strategy where reviewers only examine data that deviates from predefined standards. Instead of line-by-line audits of clean data, the system flags anomalies, allowing QA teams to focus their expertise on high-risk events.

Why Manual Review is Riskier Than You Think

Trusting a purely manual process involves several hidden risks that are difficult to defend under modern regulatory scrutiny:

- The Limit of Human Attention: A reviewer is statistically unlikely to maintain 100% accuracy across a 500-page batch record with hundreds of parameters.

- The “Invisible” Missing Data: Human eyes are naturally better at seeing incorrect values than finding what isn’t there, such as a missing signature or a blank field.

- Mathematical Human Error: Simple calculations on the shop floor are frequently done incorrectly and can easily be overlooked during a manual audit.

- Unstructured Data Blindness: Margin notes, strikeouts, and informal corrections contain critical context. During a long shift, these nuances are easily missed by human reviewers.

How AI Makes Review by Exception a Reality

The Mareana platform transforms paper-based data into a digital intelligence hub using three core pillars:

- AI-OCR for Actionable Data: Mareana digitizes everything from handwritten signatures to complex tables. The system uses “confidence scoring” to flag low-confidence snippets, ensuring that only data requiring a “human-in-the-loop” verification is surfaced.

- Automated Rule Verification: The platform performs real-time math verifications across multiple pages and checks values against the Master Batch Record (MBR). Correct calculations and within-range values are marked green, allowing the reviewer to skip redundant checks.

- AI Agents for Unstructured Exceptions: Specialized AI Agents detect “messy” data like margin notes. If a note says “Hold time extended by 15 minutes,” the system doesn’t just read it; it cross-references the SOP to verify if that extension is within the validated threshold. It then flags the note as a “Reviewed Exception” for final QA confirmation.
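The rule verification pillar can be sketched in a few lines: check each recorded value against its limits, treat blank entries as exceptions, and recompute a shop-floor calculation. The parameter names, limits, and tolerance below are hypothetical and are not taken from any real Master Batch Record or from the Mareana platform.

```python
from dataclasses import dataclass


@dataclass
class Limit:
    low: float
    high: float


# Hypothetical MBR limits for three parameters.
mbr_limits = {
    "granulation_temp_c": Limit(20.0, 25.0),
    "blend_time_min": Limit(10.0, 15.0),
    "hold_time_min": Limit(0.0, 60.0),
}


def review_by_exception(record, limits, tolerance=0.01):
    """Return only the entries a human reviewer needs to look at."""
    exceptions = []
    for name, limit in limits.items():
        value = record.get(name)
        if value is None:
            # A blank field is an exception, not a pass.
            exceptions.append((name, "missing entry"))
        elif not (limit.low <= value <= limit.high):
            exceptions.append((name, f"{value} outside {limit.low}-{limit.high}"))
    # Recompute the operator's yield calculation (assumes both weights are present).
    recalculated = round(100 * record["actual_kg"] / record["theoretical_kg"], 2)
    if abs(recalculated - record["recorded_yield_pct"]) > tolerance:
        exceptions.append(
            ("recorded_yield_pct",
             f"recorded {record['recorded_yield_pct']}, recalculated {recalculated}")
        )
    return exceptions


# Hypothetical executed record: one out-of-range value, one blank, one math error.
batch_record = {
    "granulation_temp_c": 26.1,
    "blend_time_min": None,
    "hold_time_min": 45.0,
    "actual_kg": 94.0,
    "theoretical_kg": 100.0,
    "recorded_yield_pct": 95.0,
}

exceptions = review_by_exception(batch_record, mbr_limits)
```

The in-range hold time never reaches the reviewer, while the blank blend time does, which addresses the "invisible missing data" risk described earlier.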

By implementing RbE, pharma manufacturers move from “looking for needles in haystacks” to an exception-only workflow that reduces total review time by up to 70%.


4. Paper Batch Record Digitization: Unlocking “Dark Data”

Despite the push for digitization, a significant portion of pharmaceutical manufacturing data remains locked in paper batch records. This “dark data” is physically present but analytically inaccessible, creating a massive operational burden for Quality and Operations teams. AI-powered digitization transforms these unstructured documents into structured, queryable data.

The Shift from Manual Entry to AI-Assisted Extraction

Manual digitization is notoriously slow and introduces a high risk of transcription errors. AI changes this workflow through several key mechanisms:

- Foundation for Manufacturing Intelligence: Digitized records serve as the fuel for Genealogy and Review by Exception. By making paper data “liquid,” manufacturers can apply advanced analytics to legacy products, ensuring they benefit from the same insights as modern, digitally native processes.

- High-Accuracy Handwriting Extraction: Pharma-specific AI-OCR engines extract data from handwritten entries, margin notes, and complex tables. This reduces the time required for data extraction by up to 90% compared to manual methods.

- Confidence Scoring and Human-in-the-Loop: AI provides a confidence score for every digitized field. High-confidence data can be accepted in bulk with little risk of inaccuracy, while low-confidence “snippets” are flagged for human review. This ensures that any potential digitization errors are caught by a subject matter expert, fulfilling regulatory requirements for human oversight in GxP environments.

- Source-to-Data Traceability: Every digitized data point remains linked to a high-resolution snippet of the original paper record. This provides the “single source of truth” required for GxP compliance and allows inspectors to verify digital records against the original physical source instantly.

- Immediate Knowledge Access: Digitizing records allows teams to perform cross-batch trend analysis and historical searches in seconds. Instead of searching through physical filing cabinets, QA leaders use natural language queries to retrieve specific values across years of production history.
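The confidence scoring and traceability mechanisms above amount to a routing step. The sketch below assumes each extracted field carries a confidence score between 0 and 1 and a reference to its source snippet. The 0.95 threshold and all names are illustrative assumptions; an actual acceptance threshold would need to be justified and validated for the intended use.

```python
from typing import NamedTuple


class ExtractedField(NamedTuple):
    name: str
    value: str
    confidence: float   # assumed to be a score in [0, 1] from the OCR engine
    snippet_ref: str    # pointer back to the image region on the paper record


def route(fields, threshold=0.95):
    """Split fields into bulk-accepted and human-review queues."""
    accepted, needs_review = [], []
    for item in fields:
        if item.confidence >= threshold:
            accepted.append(item)
        else:
            needs_review.append(item)
    return accepted, needs_review


# Hypothetical extraction result for one page of a batch record.
page_fields = [
    ExtractedField("batch_no", "B20240017", 0.99, "page3/region1"),
    ExtractedField("mix_speed_rpm", "120", 0.97, "page3/region4"),
    ExtractedField("operator_initials", "JH", 0.71, "page3/region9"),
]

accepted, needs_review = route(page_fields)
```

Because every field keeps its `snippet_ref`, a reviewer working the low-confidence queue can be shown the original handwriting next to the extracted value.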


5. AI-Powered Supply Chain Management and Demand Forecasting

Drug shortages are among the most consequential failures in pharmaceutical operations, with consequences that extend from revenue loss and supply penalties to patient harm. In early 2024, the United States reported 323 active drug shortages. The root causes are well understood: demand volatility, single-source dependencies, extended lead times, and the inability to detect supply risk signals early enough to act before stockouts materialize.

AI supply chain management addresses these causes directly, transforming pharmaceutical supply chains from reactive systems that respond to disruptions into predictive networks that anticipate them.

Demand Forecasting with External Signal Integration

Conventional pharmaceutical demand forecasting relies primarily on historical sales data and planned production schedules. This approach performs adequately in stable market conditions and fails precisely when conditions change – during disease outbreaks, regulatory actions affecting competitors, seasonal demand spikes, or supply disruptions at key API manufacturers.

AI demand models improve forecast accuracy by integrating external signals alongside internal data:

- Hospital admission rates and disease surveillance data for infectious disease products

- Regulatory action databases tracking competitor product withdrawals or supply disruptions

- Supplier financial health indicators and geopolitical risk signals for raw material sourcing

- Prescription trend data and payer formulary changes for commercial products

By correlating these signals with historical demand patterns, AI models detect demand shifts weeks earlier than traditional forecasting, providing the planning window that procurement and manufacturing scheduling need to respond effectively. Organizations implementing AI demand forecasting report a 20–30% reduction in forecast error and, in oncology applications, an 80% reduction in critical stockouts.

Multi-Echelon Inventory Optimization

For pharmaceutical companies with multi-CDMO manufacturing networks, AI supply chain management extends beyond demand forecasting into network-level inventory optimization. The manufacturing knowledge graph provides visibility into inventory positions across the network – finished goods at distribution centers, drug product at CDMOs awaiting release, drug substance in transit, and raw material inventory at manufacturing partners.

AI optimization models use this connected inventory picture to make dynamic recommendations: when to pull forward a production campaign, how to allocate available drug substance across competing product demand, and when safety stock levels at a specific node in the network warrant replenishment ahead of the standard schedule.

Sponsor-CDMO Supply Coordination

For asset-light pharmaceutical companies whose manufacturing is entirely outsourced, AI supply chain tools provide the visibility into CDMO operations that traditional oversight models cannot. By connecting CDMO manufacturing data – batch completion status, yield trends, deviation activity – to the sponsor’s supply planning models, AI enables proactive supply risk identification rather than reactive response to CDMO-reported delays.

When a CDMO’s yield begins trending downward three batches before it would breach a specification limit, a sponsor with connected manufacturing intelligence can begin contingency planning – alternative sourcing, demand prioritization, customer communication – before a supply impact materializes.
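One simple way to estimate how many batches remain before a declining yield reaches its limit is a least-squares line through recent batches. This is a deliberately basic sketch with invented yield values and an invented limit. It assumes a roughly linear trend and says nothing about uncertainty, so it should be read as an illustration of the idea rather than a forecasting method.

```python
import math


def linear_fit(values):
    """Ordinary least-squares slope and intercept against batch index 0..n-1."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def batches_until_limit(values, lower_limit):
    """Batches from the latest one until the fitted line reaches the lower limit.

    Returns None when the trend is flat or rising.
    """
    slope, intercept = linear_fit(values)
    if slope >= 0:
        return None
    crossing_index = (lower_limit - intercept) / slope
    remaining = crossing_index - (len(values) - 1)
    return max(0, math.ceil(remaining))


# Hypothetical yields (%) for the last five batches and a lower limit of 88%.
recent_yields = [95.0, 94.0, 93.0, 92.0, 91.0]
remaining = batches_until_limit(recent_yields, lower_limit=88.0)
```

With these values the fitted line loses one percentage point per batch, so `remaining` is 3: the window in which contingency planning can still start ahead of the impact.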

6. Smart Continuous Process Verification (CPV): From Monitoring to Prediction

Traditional CPV programs generate charts. Smart CPV programs generate decisions.

The difference is not the data; most manufacturing sites already capture enormous volumes of process parameter data through historians, MES, and LIMS. The difference is what happens to that data after it is captured. In a conventional CPV program, a statistician or process engineer reviews charts periodically, identifies trends manually, and escalates concerns through a review cycle that may take weeks to complete. By the time a drift is confirmed and acted upon, the process has already moved further out of its historical range.

What This Enables Operationally

- Earlier intervention: Out-of-trend conditions are detected an average of three batches earlier than traditional manual monitoring, allowing engineering and quality teams to investigate and correct before a deviation occurs

- Real-time release readiness: Continuous parameter monitoring throughout the batch lifecycle builds the quality data record in parallel with manufacturing, supporting the move toward real-time or parametric release

- Proactive CAPA: When CPV detects a drift pattern, the system can cross-reference historical deviations and investigations connected to similar patterns, providing context for root cause analysis before a formal deviation is even opened

- Regulatory defensibility: Automated CPV records with full audit trail satisfy the data integrity requirements of ICH Q10 and support Annual Product Quality Review generation without manual data assembly

For small and mid-size manufacturers without dedicated statisticians, Smart CPV effectively extends the analytical capacity of the quality team, providing the continuous monitoring that regulators expect without requiring proportional headcount.

7. AI-Powered Predictive Maintenance

Unplanned equipment downtime in pharmaceutical manufacturing carries costs that go beyond the lost production hours. A bioreactor’s failure mid-batch does not just pause manufacturing; it destroys the batch. A filling line stoppage during a campaign can trigger an investigation, a deviation, and potentially a supply disruption. In a GMP environment, equipment availability is directly linked to product availability.

Traditional maintenance programs operate on two models. Time-based maintenance schedules preventive work at fixed intervals, regardless of the actual condition of the equipment, leading to maintenance performed too early when equipment is fine and sometimes too late when failure modes do not follow predictable timelines. Reactive maintenance waits for failure, accepting downtime and batch loss as an operational cost.

Predictive maintenance, enabled by AI analysis of equipment sensor data and operational history, offers a third model: maintenance performed when equipment condition actually warrants it, based on real signals rather than fixed schedules or failure events.

How AI Predictive Maintenance Works in a GMP Environment

Pharmaceutical manufacturing equipment – bioreactors, chromatography systems, homogenizers, filling lines, autoclaves – generates continuous operational data through sensors and historians. Temperature behavior, vibration signatures, pressure profiles, motor current draw, and flow rates all carry information about equipment condition. Individually, these signals are difficult to interpret as maintenance indicators. In combination, as patterns over time, they reveal the early signatures of developing failure modes.

Mareana connects to equipment historian data through the manufacturing knowledge graph, linking equipment operational signals to the batch records of every run that equipment has performed. This connection is critical: it allows the AI to correlate subtle equipment behavior changes with downstream process outcomes, identifying not just that a piece of equipment is showing early signs of wear but that similar equipment behavior in the past was associated with increased process variability or batch failures.

Organizations implementing AI predictive maintenance report a 50% reduction in unplanned downtime – a figure that translates directly to improved manufacturing schedule adherence and reduced deviation burden.

8. Trends and SPC Charts: Automated Statistical Process Control

Statistical Process Control has been a manufacturing quality tool for decades. In pharmaceutical manufacturing, it is increasingly a regulatory expectation – ICH Q10 and the FDA’s Process Validation guidance both call for continued process verification programs that demonstrate ongoing process consistency through statistical monitoring.

The gap between what SPC can deliver and what most manufacturers actually achieve with it comes down to implementation. Building and maintaining SPC charts for a commercial pharmaceutical product requires selecting the appropriate chart type for each parameter, establishing statistically valid control limits, updating charts as new batches are completed, and reviewing them frequently enough to detect trends before they become deviations. For a product with dozens of monitored parameters across multiple process steps, this is a substantial ongoing analytical burden.
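For a single parameter measured once per batch, the standard starting point is an individuals chart whose limits are derived from the average moving range. The sketch below uses invented assay values and applies only the basic rule (a point beyond the three-sigma limits); run rules and the choice of baseline period are left out.

```python
def individuals_limits(baseline):
    """Lower limit, center line, and upper limit for an individuals (I) chart."""
    center = sum(baseline) / len(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    mr_bar = sum(moving_ranges) / len(moving_ranges)
    # 1.128 is the d2 constant for subgroups of two consecutive points.
    sigma = mr_bar / 1.128
    return center - 3 * sigma, center, center + 3 * sigma


def out_of_control(new_values, baseline):
    """Index and value of each new point outside the baseline limits."""
    lower, _, upper = individuals_limits(baseline)
    return [(i, v) for i, v in enumerate(new_values) if v < lower or v > upper]


# Hypothetical assay results (% label claim) from eight historical batches.
baseline_assay = [99.8, 100.2, 100.0, 99.9, 100.1, 100.3, 99.7, 100.0]
new_batches = [100.1, 100.9, 99.6]

lower, center, upper = individuals_limits(baseline_assay)
signals = out_of_control(new_batches, baseline_assay)
```

With this data the limits are roughly 99.24 and 100.76, so only the second new batch (100.9) is flagged. Repeating this for dozens of parameters after every batch is the ongoing burden the paragraph above describes.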

AI-Automated Chart Generation and Monitoring

Mareana’s Charts module automates the construction and maintenance of SPC charts directly from the manufacturing knowledge graph as batch data is captured from MES, LIMS, or digitized paper records.

The AI layer adds two capabilities beyond conventional SPC:

- Multivariate trend detection: Rather than monitoring each parameter independently, the system identifies correlated parameter groups and detects shifts in multivariate space, catching process drift that would be invisible in any single-variable chart.

- Natural language summarization: Through integration with Mareana’s Neptune AI co-pilot, quality teams can query chart data in plain language, without navigating chart interfaces or building custom queries.
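A classical way to detect a shift in multivariate space is Hotelling's T² statistic, shown here for two correlated parameters using only the standard library. This is a swapped-in textbook technique chosen for illustration; the source does not state which method the platform uses. The data is invented, and comparing T² against a formal control limit is omitted.

```python
def mean(values):
    return sum(values) / len(values)


def t_squared(point, baseline_x, baseline_y):
    """Hotelling's T-squared distance of `point` from a two-variable baseline."""
    n = len(baseline_x)
    mx, my = mean(baseline_x), mean(baseline_y)
    # Sample variances and covariance of the baseline.
    sxx = sum((x - mx) ** 2 for x in baseline_x) / (n - 1)
    syy = sum((y - my) ** 2 for y in baseline_y) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in zip(baseline_x, baseline_y)) / (n - 1)
    det = sxx * syy - sxy ** 2
    dx, dy = point[0] - mx, point[1] - my
    # d^T S^-1 d written out for a 2x2 covariance matrix.
    return (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det


# Hypothetical baseline: two parameters that normally rise and fall together.
param_a = [1.0, 2.0, 3.0, 4.0, 5.0]
param_b = [1.1, 1.9, 3.2, 3.8, 5.0]

consistent = t_squared((4.0, 4.1), param_a, param_b)   # follows the correlation
broken = t_squared((2.0, 4.0), param_a, param_b)       # breaks the correlation
```

Both test points sit inside the historical range of each parameter on its own, yet `broken` is more than a hundred times larger than `consistent` because it violates the relationship between the two. That is the kind of drift a single-variable chart cannot show.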

Regulatory Alignment

Automated SPC charts with full audit trail, version control, and documented statistical methodology satisfy the data integrity and CPV program requirements of ICH Q10, EU GMP Annex 11, and FDA Process Validation guidance. The automated generation of chart packages for Annual Product Quality Reviews eliminates weeks of manual data compilation.

9. Generative AI for Regulatory Documentation and Electronic Batch Records

Regulatory documentation is one of the most resource-intensive activities in pharmaceutical quality operations and one of the least analytically valuable ways for skilled professionals to spend their time. Writing batch manufacturing records, compiling annual product quality reviews, drafting deviation reports, and preparing submission documents all require access to the same manufacturing data that the rest of the quality system uses, assembled into specific formats for specific regulatory purposes.

Generative AI does not replace the regulatory expertise required to produce these documents correctly. It removes the data assembly burden that prevents that expertise from being applied where it matters most.

Electronic Batch Records Generated from Connected Manufacturing Data

Traditional electronic batch records are digital forms; they replace the paper format without changing the underlying process of manual entry and manual review. The operator still enters data, field by field. The reviewer still reads page by page. The value of electronic capture is primarily in storage and retrieval, not in the intelligence applied to the data.

Mareana’s approach begins from the manufacturing knowledge graph rather than from a blank form. As a batch is executed, process parameters, in-process results, material consumptions, equipment usage, and operator sign-offs are captured across connected systems and consolidated into the batch record automatically. The eBR is assembled from verified manufacturing data that already exists in the connected network.

Generative AI then applies two additional capabilities:

Automated narrative generation: Deviation descriptions, investigation summaries, and batch record narrative sections are drafted by AI from the underlying structured data. The quality reviewer edits and approves rather than writes from scratch, reducing documentation time while maintaining the expert judgment that regulatory submissions require.

Specification and SOP cross-referencing: When a parameter value is entered or extracted, the AI automatically cross-references it against the Master Batch Record specification and flags any discrepancy before the reviewer reaches that section. Missing signatures, blank mandatory fields, and out-of-specification entries are identified at data capture, not during final review.
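As an illustration of the cross-referencing step, the following minimal sketch checks captured values against specification limits and flags blank fields and missing signatures at capture time. The `Spec` structure, the parameter names, and the limits are hypothetical and do not represent Mareana's implementation or any real Master Batch Record.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Spec:
    """One Master Batch Record limit (hypothetical structure)."""
    low: float
    high: float
    unit: str


def review_entries(entries: dict, specs: dict, signatures: dict) -> list:
    """Return reviewer-facing flags; an empty list means no findings."""
    flags = []
    for name, spec in specs.items():
        value = entries.get(name)
        if value is None:
            # Mandatory field was never filled in
            flags.append(f"{name}: mandatory field is blank")
        elif not spec.low <= value <= spec.high:
            # Value recorded but outside the specification range
            flags.append(
                f"{name}: {value} {spec.unit} outside "
                f"{spec.low}-{spec.high} {spec.unit}"
            )
        if not signatures.get(name):
            # Empty or absent signature counts as missing
            flags.append(f"{name}: operator signature missing")
    return flags


# Illustrative data only
specs = {
    "granulation_temp": Spec(20.0, 25.0, "degC"),
    "blend_time": Spec(10.0, 15.0, "min"),
    "tablet_hardness": Spec(8.0, 12.0, "kp"),
}
entries = {"granulation_temp": 26.1, "blend_time": 12.0, "tablet_hardness": None}
signatures = {"granulation_temp": "op_017", "blend_time": "", "tablet_hardness": "op_017"}

flags = review_entries(entries, specs, signatures)
for flag in flags:
    print(flag)
```

Because the check is deterministic and rule-based, the same inputs always produce the same flags, which is what makes this kind of step straightforward to validate.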

Regulatory Submission Support

For regulatory affairs teams managing CMC submissions, generative AI can compile process data summaries, comparative batch analyses, and CPV data packages from the manufacturing knowledge graph, reducing the manual effort required to prepare Module 3 documentation and post-approval change supplements.

Validation and Governance Considerations

Generative AI in GxP document generation requires a clear governance framework. Mareana’s approach separates two kinds of component. Validated, deterministic AI agents perform data retrieval, calculation, and specification checking with fully traceable outputs. Generative narrative AI operates as a drafting assistant subject to mandatory human review. This architecture satisfies the FDA’s CSA framework and the emerging requirements of EU GMP Draft Annex 22 by ensuring that every data point in a regulatory document is traceable to a validated source.
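One way to picture the traceability requirement is a deterministic gate that runs before a drafted narrative reaches the human reviewer. The sketch below is an assumption for illustration, not a description of any vendor's product: it extracts every number from a draft and reports those that match no validated data point. The `DataPoint` structure and source labels are hypothetical, and a real check would also need to handle units, dates, and identifiers that contain digits.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPoint:
    name: str
    value: float
    source: str  # hypothetical label for the system and record the value came from


def untraceable_numbers(narrative: str, data_points: list) -> list:
    """Return numbers in the narrative that match no validated data point."""
    # Normalise both sides with the same format so 25 and 25.0 compare equal
    allowed = {f"{p.value:g}" for p in data_points}
    found = re.findall(r"\d+(?:\.\d+)?", narrative)
    return [n for n in found if f"{float(n):g}" not in allowed]


validated = [
    DataPoint("granulation_temp", 26.1, "MES record (illustrative)"),
    DataPoint("granulation_temp_limit", 25.0, "MBR specification (illustrative)"),
]
draft = (
    "Granulation temperature reached 26.1 degC against a limit of 25 degC; "
    "hold time was 45 min."
)

unsupported = untraceable_numbers(draft, validated)
print(unsupported)  # the hold time has no validated source in this example
```

A non-empty result would send the draft back for correction rather than forward for approval, keeping the generative step inside a deterministic boundary.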

Strategic Recommendations for Pharma Manufacturers

Small and mid-size pharma companies must determine how to adopt AI in a compliant and ROI-positive way with limited resources.

- Prioritize Data Connectivity over Collection: Focus on building a “Manufacturing Intelligence” layer that breaks down silos between MES, LIMS, and ERP before deploying complex models.

- Adopt CSA Thinking: Update internal Standard Operating Procedures (SOPs) to reflect the FDA’s risk-based Computer Software Assurance framework.

- Manage the Model Lifecycle: Establish formal change control processes for retraining models and monitoring “model drift”—the performance degradation that occurs as process conditions change.

- Evaluate CDMO Capabilities: For asset-light organizations, the ability of a CDMO to share AI-ready data is a critical factor in vendor selection. Seek CDMO-agnostic platforms that can ingest paper or PDFs to achieve visibility without changing shop-floor workflows.
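The model drift monitoring recommended in the list above can be sketched very simply: compare a model's recent prediction error against the error recorded at validation and raise a flag when it degrades beyond an agreed tolerance. The function names, the 1.5x tolerance, and the yield figures are illustrative assumptions; an actual threshold would be set and justified under change control.

```python
from statistics import mean


def mean_abs_error(predicted, actual):
    """Average absolute difference between predictions and measurements."""
    return mean(abs(p - a) for p, a in zip(predicted, actual))


def drift_check(baseline_mae, predicted, actual, tolerance=1.5):
    """Flag drift when recent error exceeds tolerance x the validated baseline."""
    recent_mae = mean_abs_error(predicted, actual)
    return {
        "recent_mae": recent_mae,
        "ratio": recent_mae / baseline_mae,
        "drift_suspected": recent_mae > tolerance * baseline_mae,
    }


# Hypothetical yield predictions (%) vs. measured yields for the last five batches
predicted = [92.0, 91.5, 93.0, 92.2, 91.8]
actual = [90.1, 89.7, 90.5, 90.0, 89.9]

result = drift_check(baseline_mae=1.0, predicted=predicted, actual=actual)
print(result["drift_suspected"], round(result["recent_mae"], 2))
```

A flag from a check like this would typically open a change control record for investigation and possible retraining rather than trigger automatic retraining.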

The technology for AI in pharma manufacturing is mature. Success over the next 24 months depends on the quality of an organization’s data foundations and the development of “trilingual” talent proficient in data science, life sciences, and GMP requirements.

The Future of AI in Pharmaceutical Manufacturing

The AI capabilities deployed in pharmaceutical manufacturing today represent a starting point, not a ceiling. The trajectory over the next three to five years points toward a manufacturing environment where AI moves from supporting human decisions to anticipating the conditions under which those decisions will be needed, and from individual use case deployment to a unified manufacturing intelligence fabric that connects quality, operations, supply chain, and regulatory functions into a single decision-support environment.

Agentic AI: From Analysis to Autonomous Action

The most significant near-term shift in pharmaceutical manufacturing AI is the move from analytical tools (systems that surface information for human decision-making) to agentic systems that can initiate multi-step workflows autonomously within defined boundaries.

Agentic AI in manufacturing will not operate without human oversight. The FDA’s April 2026 Warning Letter specifically citing a manufacturer for deploying AI agents in quality decisions without adequate human oversight has made clear that the regulatory expectation is human-in-the-loop governance for quality-critical activities. But within that governance framework, agentic AI can dramatically accelerate the workflows that surround human decisions.

An agent monitoring CPV data could, upon detecting an out-of-trend signal, automatically initiate a deviation record, pull the relevant historical batches from the knowledge graph, generate a preliminary investigation summary, and route it to the appropriate quality reviewer, all before a human reviewer has opened their inbox. The human decision remains human. The preparation for that decision is automated.
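The workflow described above can be sketched as follows. Everything here is a simplified assumption for illustration: the out-of-trend rule is a plain k-sigma check, the "investigation summary" is a text template standing in for a generative step, and the record structure and reviewer address are hypothetical. The point the sketch makes is that the agent only prepares a draft and never closes a record itself.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class DeviationDraft:
    batch_id: str
    parameter: str
    summary: str
    related_batches: list
    assigned_to: str
    status: str = "PENDING_HUMAN_REVIEW"  # the agent never approves or closes


def is_out_of_trend(history_values, value, k=3.0):
    """Simple k-sigma rule against historical values; real CPV rules vary."""
    centre, spread = mean(history_values), stdev(history_values)
    return abs(value - centre) > k * spread


def run_agent(batch_id, parameter, value, history, reviewer):
    """history maps batch id -> historical value. Returns a draft or None."""
    values = list(history.values())
    if not is_out_of_trend(values, value):
        return None
    # Pull the three historical batches closest in value as context
    related = sorted(history, key=lambda b: abs(history[b] - value))[:3]
    summary = (
        f"{parameter} for {batch_id} was {value}, versus a historical mean of "
        f"{mean(values):.3f} across {len(values)} batches."
    )
    return DeviationDraft(batch_id, parameter, summary, related, reviewer)


# Illustrative pH history for six prior batches
history = {
    "B001": 7.01, "B002": 6.98, "B003": 7.03,
    "B004": 6.99, "B005": 7.00, "B006": 7.02,
}
draft = run_agent("B007", "pH", 7.20, history, reviewer="qa.reviewer@example.com")
print(draft.status, draft.related_batches)
```

An in-trend value returns `None`, so no record is created and no reviewer is interrupted.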

Real-Time Release and Parametric Release at Scale

ICH Q8, Q9, and Q10 collectively envision a manufacturing paradigm where product quality is assured through process understanding and real-time monitoring rather than end-product testing. The regulatory framework for real-time release testing and parametric release exists. What has limited broader adoption is the data infrastructure required to support it – continuous, reliable, integrated parameter monitoring that produces a defensible quality record in parallel with manufacturing.

AI-powered manufacturing intelligence platforms, connected to the full spectrum of process data sources, are building this infrastructure. As connected data environments mature and CPV programs accumulate the process performance history required for regulatory confidence, real-time release will become operationally viable for a broader range of products and manufacturing sites.

Federated Learning Across Manufacturing Networks

One of the limitations of AI models in pharmaceutical manufacturing is the data volume required to train models that generalize reliably across process conditions. A single manufacturing site, running a single product, may not produce enough batch history to build statistically robust predictive models for yield optimization or failure prediction.

Federated learning, an AI architecture in which models are trained across multiple data sources without centralizing the underlying data, offers a path to larger effective training datasets while preserving the data governance boundaries that pharmaceutical companies require. A network of CDMO and sponsor sites contributing to a federated model could produce yield prediction or deviation detection capabilities that no individual site could build from its own batch history alone, without any site sharing proprietary process data with others.
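A minimal sketch of the idea, using federated averaging on a toy linear model: each site runs gradient descent on its own private data, and only the resulting parameters and sample counts leave the site. The site names, data, learning rate, and round counts are illustrative assumptions; production systems add secure aggregation, privacy controls, and validation that this sketch omits.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Gradient descent on one site's private (x, y) pairs for y = w*x + b."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return (w, b), n  # only parameters and a count are shared, never the data


def federated_average(updates):
    """Weight each site's parameters by its sample count."""
    total = sum(n for _, n in updates)
    w = sum(params[0] * n for params, n in updates) / total
    b = sum(params[1] * n for params, n in updates) / total
    return (w, b)


# Each site's data stays local; both roughly follow y = 2x + 1
sites = {
    "site_a": [(0.0, 1.0), (1.0, 3.1), (2.0, 4.9)],
    "site_b": [(0.5, 2.0), (1.5, 4.1), (2.5, 6.0), (3.0, 7.1)],
}

global_weights = (0.0, 0.0)
for _ in range(50):  # communication rounds
    updates = [local_update(global_weights, data) for data in sites.values()]
    global_weights = federated_average(updates)

print(global_weights)
```

The shared model ends up close to the trend both sites follow, even though neither site's batch data was ever pooled.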

Regulatory frameworks for federated AI in pharmaceutical manufacturing are still emerging, but the technical capability exists and early pilots are underway in adjacent regulated industries.

AI-Native Regulatory Submissions

The FDA’s deployment of Project Elsa and the movement toward real-time regulatory monitoring signal a future in which the interaction between pharmaceutical manufacturers and regulatory agencies becomes more continuous and more data-driven. Regulatory submissions that currently require months of manual data assembly may be replaced by dynamic, connected data packages that regulators can query directly against validated manufacturing databases.

For manufacturers who have built connected manufacturing intelligence platforms, this transition represents a competitive advantage: the data required for regulatory submissions is already assembled, connected, and auditable. For manufacturers who have not, it represents a significant compliance gap that will become increasingly visible as regulatory data expectations evolve.

Digital Twins Across the Full Product Lifecycle

Current digital twin applications in pharmaceutical manufacturing are typically process-specific – a bioreactor model, a lyophilization cycle simulation, a filling line performance model. The next generation of pharmaceutical digital twins will span the full product lifecycle, from molecule design through development, scale-up, commercial manufacturing, and post-market surveillance.

A lifecycle digital twin would connect development batch history to commercial process performance, linking the early design space established in phase one studies to the CPV monitoring that tracks commercial process consistency years later. It would give technology transfer teams a quantitative, queryable record of every process parameter explored during development, and give manufacturing operations teams a real-time simulation environment for evaluating the impact of proposed process changes before they are implemented.

The knowledge graph architecture that underlies current manufacturing intelligence platforms is the data structure that makes lifecycle digital twins achievable. It preserves the relationships between data points across time, across systems, and across organizational boundaries, which supports the longitudinal queries that a lifecycle twin requires.

The Convergence of Quality and Manufacturing Intelligence

Perhaps the most consequential long-term shift in AI-enabled pharmaceutical manufacturing is the dissolution of the boundary between quality systems and manufacturing systems as separate functional domains with separate data architectures.

In the current paradigm, quality data — deviations, CAPAs, change controls, audit findings — lives in QMS platforms that are largely disconnected from the process data in manufacturing and laboratory systems. Quality events are linked to batches through manual cross-referencing. The relationship between a process parameter trend and a quality event pattern is invisible unless someone builds that connection manually.

In a connected manufacturing intelligence environment, quality and process data are nodes in the same graph. A deviation connects directly to the batch, the process step, the parameter values, the raw material lots, and the equipment state at the time it occurred. A CAPA connects to the root cause investigation that generated it and to the process monitoring that verifies its effectiveness over subsequent batches.
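To make the graph idea concrete, the sketch below stores quality and process records as nodes in one small in-memory graph and answers a question that would otherwise need manual cross-referencing: which other batches used the same raw material lot as the batch with a deviation? The class, relation names, and identifiers are hypothetical; a real platform would use a dedicated graph store.

```python
from collections import defaultdict, deque


class KnowledgeGraph:
    """Tiny undirected graph with labelled edges (illustrative only)."""

    def __init__(self):
        self._edges = defaultdict(set)

    def link(self, a, relation, b):
        self._edges[a].add((relation, b))
        self._edges[b].add((relation, a))  # stored both ways so queries work from either end

    def neighbours(self, node, relation=None):
        """Sorted neighbours of node, optionally filtered by relation."""
        return sorted(n for r, n in self._edges[node] if relation in (None, r))

    def within(self, start, max_hops):
        """Breadth-first search: map of node -> hop count, up to max_hops."""
        seen, queue = {start: 0}, deque([start])
        while queue:
            node = queue.popleft()
            if seen[node] == max_hops:
                continue
            for _, nxt in self._edges[node]:
                if nxt not in seen:
                    seen[nxt] = seen[node] + 1
                    queue.append(nxt)
        seen.pop(start)
        return seen


g = KnowledgeGraph()
g.link("DEV-101", "occurred_in", "BATCH-A")
g.link("BATCH-A", "used_lot", "LOT-77")
g.link("BATCH-B", "used_lot", "LOT-77")
g.link("BATCH-A", "ran_on", "EQ-GRAN-2")
g.link("CAPA-9", "addresses", "DEV-101")

# Which other batches share a raw material lot with the deviating batch?
batch = g.neighbours("DEV-101", "occurred_in")[0]
lots = g.neighbours(batch, "used_lot")
exposed = sorted({b for lot in lots for b in g.neighbours(lot, "used_lot")} - {batch})
print(exposed)

# Everything within two hops of the deviation
context = g.within("DEV-101", 2)
print(sorted(context))
```

The same traversal that finds the exposed batch also surfaces the equipment and CAPA linked to the deviation, which is the kind of connected context the text describes.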

When quality and manufacturing intelligence converge in this way, the Annual Product Quality Review moves from a retrospective compliance exercise to a continuously updated, real-time view of product and process health. The state of control is visible in the system at all times.

This is the manufacturing environment that the most advanced pharmaceutical organizations are building today. It is where the industry is going.
